Looking for the plugin's configuration parameters? You can find them in the AI Request Transformer configuration reference doc.

The AI Request Transformer plugin uses a configured LLM service to introspect and transform the client’s request body before proxying the request upstream.

This plugin supports `llm/v1/chat`-style requests for all of the same providers as the AI Proxy plugin.

It also uses all of the same configuration and tuning parameters as the AI Proxy plugin, under the `config.llm` block.

The AI Request Transformer plugin runs before all of the AI Prompt plugins and the AI Proxy plugin, allowing it to also introspect LLM requests against the same, or a different, LLM.
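As a rough illustration of the `config.llm` block described above, the following Python sketch assembles a plugin configuration payload. The field names (`route_type`, `auth.header_name`, `auth.header_value`, `model.provider`, `model.name`) are assumptions based on the AI Proxy plugin's format and should be checked against the configuration reference. The helper `build_plugin_payload`, the model name, and the API key are hypothetical placeholders.

```python
import json


def build_plugin_payload(prompt: str, provider: str, model_name: str, api_key: str) -> dict:
    """Assemble an illustrative AI Request Transformer plugin configuration.

    Field names are assumed to mirror the AI Proxy plugin's format;
    verify them against the configuration reference before use.
    """
    return {
        "name": "ai-request-transformer",
        "config": {
            # Instructions for the LLM (sent as the system message).
            "prompt": prompt,
            # Same structure as the AI Proxy plugin's settings (assumed).
            "llm": {
                "route_type": "llm/v1/chat",
                "auth": {
                    "header_name": "Authorization",
                    "header_value": f"Bearer {api_key}",
                },
                "model": {"provider": provider, "name": model_name},
            },
        },
    }


payload = build_plugin_payload(
    prompt="Mask any credit card numbers in the JSON body. Return only JSON.",
    provider="openai",
    model_name="example-model",   # hypothetical model name
    api_key="<your-api-key>",     # placeholder, not a real credential
)
print(json.dumps(payload, indent=2))
```

A payload of this shape could then be submitted to the gateway through whichever configuration method you normally use.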

How it works

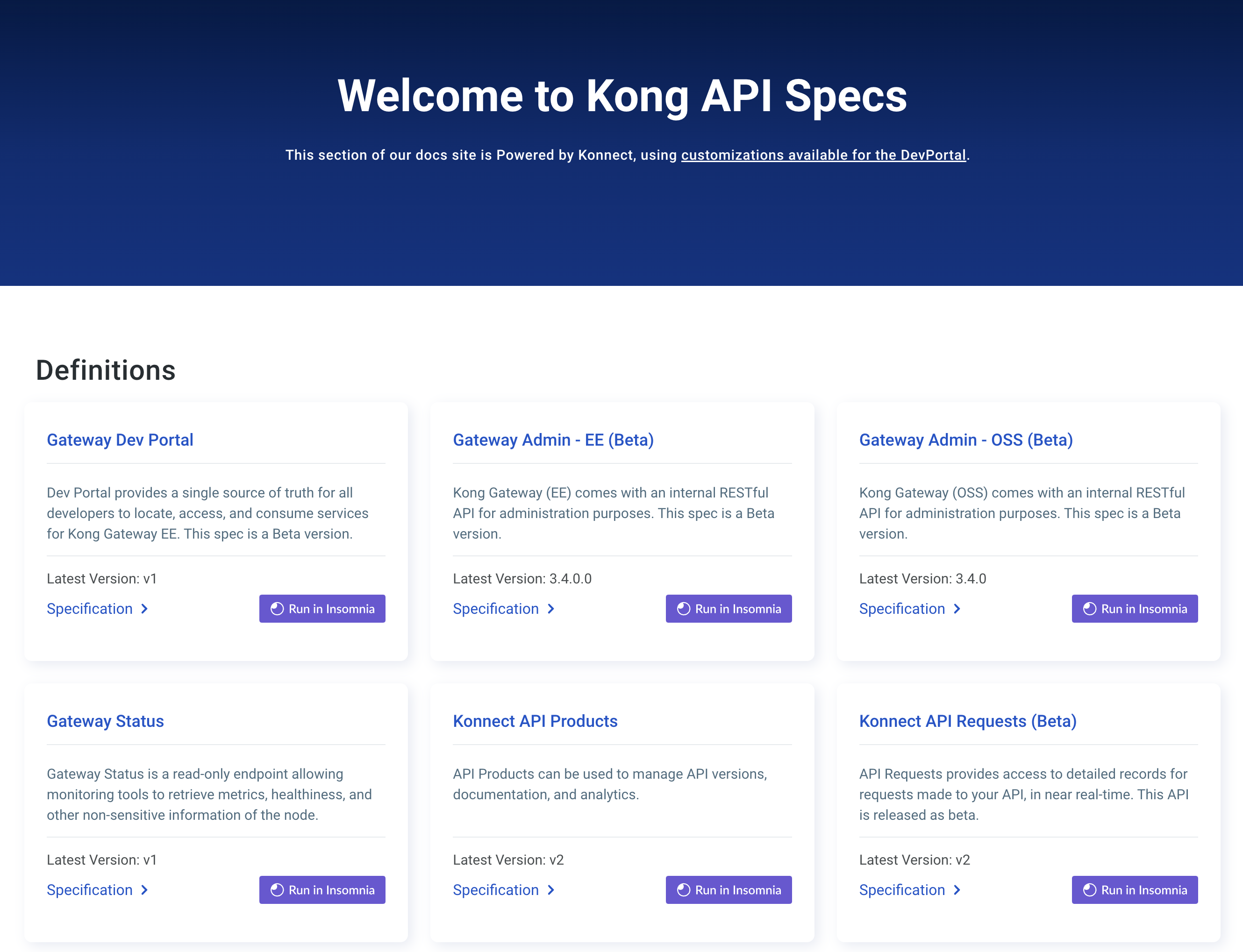

Figure 1: The journey of a consumer’s request through Kong Gateway to the backend service, where it is transformed by an LLM service together with Kong’s AI Request Transformer and AI Response Transformer plugins.

- The Kong Gateway admin sets up an `llm:` configuration block, following the same configuration format as the AI Proxy plugin, and the same `driver` capabilities.

- The Kong Gateway admin sets up a `prompt` for the request introspection. The prompt becomes the `system` message in the LLM chat request, and prepares the LLM with transformation instructions for the incoming user request body.

- The user makes an HTTP(S) call.

- Before proxying the user’s request to the backend, Kong Gateway sets the entire request body as the `user` message in the LLM chat request, and then sends it to the configured LLM service.

- The LLM service returns a response `assistant` message, which is subsequently set as the upstream request body.
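The steps above can be sketched in a few lines of Python. This is a simplified model of the flow, not the plugin's actual implementation: the function names, the `fake_llm` stand-in, and the OpenAI-style `choices[0].message.content` response shape are all assumptions made for illustration.

```python
import json
from typing import Callable, Dict, List

Message = Dict[str, str]


def build_chat_request(prompt: str, request_body: str) -> Dict[str, List[Message]]:
    """The configured prompt becomes the system message; the client's
    entire request body becomes the user message."""
    return {
        "messages": [
            {"role": "system", "content": prompt},
            {"role": "user", "content": request_body},
        ]
    }


def transform_request(
    prompt: str,
    request_body: str,
    call_llm: Callable[[dict], dict],
) -> str:
    """Send the chat request to the LLM and return the assistant message,
    which would then be used as the upstream request body."""
    chat_request = build_chat_request(prompt, request_body)
    chat_response = call_llm(chat_request)
    # Assumed response shape (OpenAI-style chat completion).
    return chat_response["choices"][0]["message"]["content"]


def fake_llm(chat_request: dict) -> dict:
    """Hypothetical stand-in for a real LLM service: it uppercases the
    "name" field of the JSON body it receives as the user message."""
    body = json.loads(chat_request["messages"][1]["content"])
    body["name"] = body["name"].upper()
    return {
        "choices": [
            {"message": {"role": "assistant", "content": json.dumps(body)}}
        ]
    }


upstream_body = transform_request(
    prompt="Uppercase the name field and return only JSON.",
    request_body='{"name": "kong"}',
    call_llm=fake_llm,
)
print(upstream_body)  # {"name": "KONG"}
```

Passing the LLM call in as a function keeps the sketch runnable without network access. In a real deployment, the gateway performs this call against the configured LLM service.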

Get started with the AI Request Transformer plugin

All AI Gateway plugins

- AI Proxy

- AI Proxy Advanced (available with a Kong Gateway Enterprise subscription)

- AI Request Transformer

- AI Response Transformer

- AI Semantic Cache (available with a Kong Gateway Enterprise subscription)

- AI Semantic Prompt Guard (available with a Kong Gateway Enterprise subscription)

- AI Rate Limiting Advanced (available with a Kong Gateway Enterprise subscription)

- AI Azure Content Safety (available with a Kong Gateway Enterprise subscription)

- AI Prompt Template

- AI Prompt Guard

- AI Prompt Decorator